Thursday, December 22, 2022

More Python Everywhere, All at Once: Looking Forward to 2023

Supporting Membership is a particularly great way to contribute to the PSF. By becoming a Supporting Member, you join a core group of PSF stakeholders, and since Supporting Members are eligible to vote in our Board and bylaws elections, you gain a voice in the future of the PSF. And we have just introduced a new sliding scale rate for Supporting Members, so you can join at the standard rate of an annual $99 contribution, or for as little as $25 annually if that works better for you. We are about three quarters of the way to our goal of 100 new Supporting Members by the end of 2022. Can you sign up today and help push us over the top?

Thank you for reading and for being a part of the one-of-a-kind community that makes Python and the PSF so special.

With warmest wishes to you and yours for a happy and healthy new year,

Deb

Thursday, December 08, 2022

Introducing a New Sliding Scale Membership

Part of our mission at the PSF is to “facilitate the growth of a diverse and international community of Python programmers.” Our community Grants Program and our Travel Grants for PyCon US have paved the way for a lot of growth, both internationally and amongst populations that are otherwise under-indexed in Python specifically, and open source generally. One area where this outreach hasn’t “trickled up” as much as we’d like is our leadership. Supporting Members can vote for our Board of Directors. If our roster of voting members can more accurately represent the entire Python community, then we can more reasonably expect the make-up of the Board to follow.

There are of course many other things we could consider doing to increase the diversity of the Python community. We welcome your thoughts on how we can continue to improve.

In the meantime, our annual membership and fundraising drive is happening right now, and we hope that you will consider becoming a Supporting Member at $25, $99, or anything in between (or more, if you have the means). Another way to support our membership drive is to share it on social media or with your friends and colleagues who use Python. We prefer to rely on word of mouth, rather than purchasing leads or haunting people with ads all over the internet, so thanks in advance for your help!

Wednesday, November 23, 2022

Where is the PSF?

Where to Find the PSF Online

One of the main ways we reach people for news and information about the PSF and Python is Twitter. There’s been a lot of uncertainty around that platform recently, so we wanted to share a brief round up of other places you can find us:

- Read our blog: It’s here! You found it! You can always find our latest updates here at pyfound.blogspot.com.

- Subscribe to our newsletter: We send out an email newsletter about every other month. You can sign up here: https://www.python.org/psf/newsletter/

- Follow us on LinkedIn: https://www.linkedin.com/company/python-software-foundation

- Follow us on Mastodon: https://fosstodon.org/@thepsf

- We're still on Twitter: https://twitter.com/ThePSF

As always, if you are looking for technical support rather than news about the foundation, we have collected links and resources here for people who are new or looking to get deeper into the Python programming language: https://www.python.org/about/gettingstarted/

You can also ask questions about Python or the PSF at discuss.python.org.

Where to Find PyCon US Online

Here’s where you can go for updates and information specific to PyCon US:

- Read the PyCon US blog: https://pycon.blogspot.com/

- Subscribe to the PyCon US Newsletter. We send out an email newsletter about four times a year, during the run up to PyCon US. You can sign up here: bit.ly/3ElTPzv

- Follow PyCon US on Mastodon: https://fosstodon.org/@pycon

- Follow PyCon US on Twitter: https://twitter.com/PyCon

Thank you for keeping in touch, and see you around the Internet!

Monday, November 07, 2022

It's time for our annual year-end PSF fundraiser and membership drive 🎉

Support Python in 2022!

For the fourth year in a row, the PSF is partnering with JetBrains on our end-of-year fundraiser. Over that time, the partnership has raised a total of over $75,000. Wow! Thank you, JetBrains, for all your support.

There are three ways to join in the drive this year:

- Save on PyCharm + get DataSpell free! JetBrains is once again supporting the PSF by providing a 30% discount on PyCharm and all proceeds will go to the PSF! But wait–there’s more! JetBrains has released DataSpell, a new IDE specifically for Data Scientists. When you buy your discounted PyCharm license, you will also get a bonus DataSpell license, free!

You can take advantage of this discount by clicking the button on the page linked here, and the discount will be automatically applied when you check out. The promotion will only be available through November 22nd, so go grab the deal today!

- Donate directly to the PSF! Every dollar makes a difference. (Does every dollar also make a kitten somewhere purr? We make no promises, but maybe you should try, just in case?😻)

- Become a member! Sign up as a Supporting member of the PSF. Be a part of the PSF, and help us sustain what we do with your annual support.

Or, heck, why not do all three? 🥳

Tuesday, November 01, 2022

Thank You for Making PyCon US Amazing, Jackie!

Jackie says, “PyCon US will forever be in my heart. Mostly because of all the wonderful people I have met and come to love. The community members, the board directors, the many volunteers, and especially the staff of the PSF have enhanced my life tremendously. I will truly miss everyone.”

Jackie has been working with the PSF on PyCon US for 10 years and most recently managed our pivot to two years of remote PyCons. She also oversaw our return to a safe and fulfilling in-person PyCon earlier this year. PyCon US would not be the successful and growing event it is today without her work.

The PSF’s Director of Infrastructure Ee Durbin shared, “For most, PyCon US is a few precious days in the spring each year. For some it is months of preparing a presentation, organizing a summit, or volunteering their time. For Jackie it has been a continuous and heartfelt commitment year over year to facilitate every detailed aspect of the conference, both in-person and online, that we cherish. The impact Jackie has had on our community via PyCon US is hard to overstate and our gratitude should be as deep and heartfelt as the commitment she made in her time directing the event.”

So, what’s next for us? Our extremely capable Event Assistant, Olivia Sauls, will be stepping up into the role of Program Director, and we will be hiring a Community Events Manager to help us run PyCon US. It would be lovely if you could help us by sharing the job listing widely.

Wednesday, October 26, 2022

Announcing Python Software Foundation Fellow Members for Q3 2022! 🎉

The PSF is pleased to announce its third batch of PSF Fellows for 2022! Let us welcome the new PSF Fellows for Q3! The following people continue to do amazing things for the Python community:

Thank you for your continued contributions. We have added you to our Fellow roster online.

The above members help support the Python ecosystem by being phenomenal leaders, sustaining the growth of the Python scientific community, maintaining virtual Python communities, maintaining Python libraries, creating educational material, organizing Python events and conferences, starting Python communities in local regions, and overall being great mentors in our community. Each of them continues to help make Python more accessible around the world. To learn more about the new Fellow members, check out their links above.

Let's continue recognizing Pythonistas all over the world for their impact on our community. The criteria for Fellow members are available online: https://www.python.org/psf/fellows/. If you would like to nominate someone to be a PSF Fellow, please send a description of their Python accomplishments and their email address to psf-fellow at python.org. We are accepting nominations for quarter 4 through November 20, 2022.

Are you a PSF Fellow and want to help the Work Group review nominations? Contact us at psf-fellow at python.org.

Tuesday, October 11, 2022

Join the Python Developers Survey 2022: Share and learn about the community

This year we are conducting the sixth iteration of the official Python Developers Survey. The goal is to capture the current state of the language and the ecosystem around it. By comparing the results with last year’s, we can identify the hottest trends in the Python community and share key insights with everyone. We encourage you to contribute to our community’s knowledge. The survey should only take you about 10-15 minutes to complete.

Contribute to the Python Developers Survey 2022!

The survey is organized in partnership between the Python Software Foundation and JetBrains. After the survey is over, we will publish the aggregated results and randomly choose 20 winners (among those who complete the survey in its entirety), who will each receive a $100 Amazon Gift Card or a local equivalent.

Thursday, July 21, 2022

Distinguished Service Award Granted to Naomi Ceder

The PSF’s Distinguished Service Award (DSA) is granted to individuals who make sustained exemplary contributions to the Python community. Each award is voted on by the PSF Board, which looks for people whose impact has positively and significantly shaped the Python world. Naomi’s work with the Python community very much exemplifies the ethos of “build the community you want to see.” She seems to particularly enjoy taking on the hardest parts, getting a new initiative started and figuring out how to take an idea from the drawing board to a regular activity that we can’t imagine leaving out.

After receiving the award Naomi shared, "I'm so grateful for the recognition, and even more grateful for all of the support that our community has given me over the years. I'm excited to see a new generation of Python volunteers continue the work to make our community and the PSF more global and inclusive, and I'm looking forward to working with smaller communities as they grow and develop."

Over the years Naomi has taken on many leadership roles to make PyCon US successful and welcoming. She served as Chair of the Hatchery Program and she helped found PyCon Charlas, our Spanish language track. At different points in time, she’s also been the Co-chair of Sprints, an Organizer of the PyCon Education Summit and Chair for poster sessions at PyCon US. She also co-founded Trans*Code, an ongoing series of hackdays (mostly) in the UK, which aims to build community and foster tech education and skills for transgender and non-binary folks. PyCon US and the global Python community would not look the way they do without her tireless, largely behind-the-scenes work. Her deep thoughtfulness coupled with her energy is an immeasurable gift to the Python community.

Curious about previous recipients of the DSA or wondering how to nominate someone? We got you.

Wednesday, July 06, 2022

Announcing Python Software Foundation Fellow Members for Q2 2022! 🎉

The PSF is pleased to announce its second batch of PSF Fellows for 2022! Let us welcome the new PSF Fellows for Q2! The following people continue to do amazing things for the Python community:

Thank you for your continued contributions. We have added you to our Fellow roster online.

The above members help support the Python ecosystem by being phenomenal leaders, sustaining the growth of the Python scientific community, maintaining virtual Python communities, maintaining Python libraries, creating educational material, organizing Python events and conferences, starting Python communities in local regions, and overall being great mentors in our community. Each of them continues to help make Python more accessible around the world. To learn more about the new Fellow members, check out their links above.

Let's continue recognizing Pythonistas all over the world for their impact on our community. The criteria for Fellow members are available online: https://www.python.org/psf/fellows/. If you would like to nominate someone to be a PSF Fellow, please send a description of their Python accomplishments and their email address to psf-fellow at python.org. We are accepting nominations for quarter 3 through August 20, 2022.

Are you a PSF Fellow and want to help the Work Group review nominations? Contact us at psf-fellow at python.org.

Friday, July 01, 2022

Board Election Results for 2022!

Congratulations to everyone who won a seat on the PSF Board! We’re so excited to work with you. New and returning Board Directors will start their three-year terms this month at the next PSF board meeting. Thanks to everyone else who ran this year and added their voice to the conversation about the future of the Python community. It was a tough race with many amazing candidates.

- Kushal Das

- Jannis Leidel

- Dawn Wages

- Simon Willison

Thanks to everyone who voted and helped us spread the word! We really appreciate that so many of you took the time to participate in our elections this year. By the numbers, we sent ballots to 1,459 voting PSF members who then chose among 26 nominees. By the end, 578 votes were cast, well over the one third required for quorum.
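The quorum arithmetic is easy to check. This is a minimal sketch that assumes quorum means at least one third of the 1,459 members who were sent ballots, rounded up to a whole vote; the binding rule lives in the PSF bylaws, not in this snippet.

```python
import math

ballots_sent = 1_459  # voting members who received a ballot (from the post)
votes_cast = 578      # ballots returned (from the post)

# Assumption: quorum = one third of eligible voters, rounded up to a whole vote.
quorum = math.ceil(ballots_sent / 3)
turnout = votes_cast / ballots_sent

print(f"quorum needed: {quorum}")             # quorum needed: 487
print(f"turnout: {turnout:.1%}")              # turnout: 39.6%
print(f"quorum met: {votes_cast >= quorum}")  # quorum met: True
```

In other words, turnout was roughly 40% against a threshold of about 33%.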

While the board elections are over, there are lots of other ways to get involved with the Python community. Here are a few things you can get started with, or you could consider serving on one of our working groups. If you want to start participating on the technical side of things, you might want to check out our forum. Next year’s board elections will happen at approximately the same time in 2023. If you want to make sure you are notified via email, join the psf-vote mailing list.

Sunday, June 19, 2022

The PSF Board Election is Open!

It’s time to cast your vote! Voting takes place from Monday, June 20 AoE, through Thursday, June 30, 2022 AoE. Check here to see how much time you have left to vote. If you are a voting member of the PSF, you will get an email from “Helios Voting Bot <no-reply@mail.heliosvoting.org>” with your ballot, and the subject line will read “Vote: Python Software Foundation Board of Directors Election 2022”. If you haven’t seen your ballot by Tuesday, please 1) check your spam folder for a message from “no-reply@mail.heliosvoting.org” and, if you don’t see anything, 2) get in touch by emailing psf-elections@python.org so we can make sure we have the most up-to-date email for you.

This might be the largest number of nominees we’ve ever had! Make sure you schedule some time to look at all their statements. We’re overwhelmed by how many of you are willing to contribute to the Python community by serving on the PSF board.

Who can vote? You need to be a Contributing, Managing, Supporting, or Fellow member as of June 15, 2022 to vote in this election. Read more about our membership types here, or if you have questions about your membership status please email psf-elections@python.org.

Thursday, June 09, 2022

The PSF's 2021 Annual Report

2021 was a challenging and exciting year for the PSF. We’ve done our best to capture some of the key numbers, details, and context in our latest annual report. Some highlights of what you’ll find in the annual report include:

- Letter from our outgoing Board Chair Lorena Mesa

- Personal reflection on the PSF’s 20th anniversary from our outgoing interim general manager and longtime community member Thomas Wouters

- Note from our new Executive Director Deb Nicholson

- Account by Łukasz Langa on what he’s accomplished and learned in his first year as the inaugural PSF Developer in Residence

- PyCon US 2021 by the numbers

- Where we sent grant funding in 2021

- Summary of our 2021 financials

- Great photos plus testimonials from some of the small number of live events the PSF supported in 2021, generously shared by these groups who are doing great work for the Python community around the world:

  - PyCon Tanzania with thanks to Noah Mukhangu for sharing;

  - Python Workshops for Girls & Teachers Spoonbill Nest Innovation Center, Albania with thanks to Terena Cardwell; and

  - Afrodjango Initiatives, Uganda with thanks to Joshua Kato.

- Sponsors who generously supported our work and the Python ecosystem in 2021

We’d love for you to take a look, and if you do, let us know what you think! You can comment here, tweet at us, or share your thoughts on discuss.python.org.

Wednesday, June 08, 2022

PyCon US: Successful Return to In-Person in 2022

We held our first in-person event since 2019 in Salt Lake City last month and it was well-attended, celebratory, and safe. We had 1,753 in-person attendees and 669 online attendees. Of the in-person attendees, 1,153 were attending their first PyCon US ever. We hope they’ll all be back! In-person attendees were masked and we took care to add extra space to the expo hall, the dining areas, the session rooms and the job fair. For many community members, this was their first in-person conference or community event of any type in almost 3 years. There were a LOT of hugs and some very enthusiastic elbow bumps.

You can take a look at PyCon 2022 by the numbers here.

Having joined the PSF as Executive Director just a few weeks before the event, I found it a great opportunity to meet the community, including long-time volunteers, current and former board members, and hard-working local Python organizers from all over the world. It was also my first opportunity to meet the amazing PSF staff in person. Did you know that the PSF facilitates PyCon US with just 8 staff members? Their dedication to providing a space that is welcoming and fun, while also being safe and respectful, knows no bounds. The community is always first at PyCon US.

We had lots of great talks and convened summits focused on Maintainers, Typing, Education, Packaging and the development of the Python Language. We also hosted Mentored Sprints for Diverse Beginners, the PyLadies Auction, four Lightning Talk Sessions, and two days of Sprints. We hit a few snags with a new AV team this year, but now most of the videos are up on our YouTube channel.

This year’s event was a little more cautious, but it was really nice to see people. We’re actively looking for ways to better engage our online attendees next year and would welcome your ideas. Thanks to our many, many volunteers, especially three-time PyCon US Chair Emily Morehouse! Thank you also to our wonderful sponsors, many of whom not only help us put on PyCon US but also support the Python programming language throughout the year!

Mark your calendar for PyCon US 2023, from April 19th to April 27th, 2023, in Salt Lake City and online. If you are interested in talking about sponsorship opportunities for 2023, please drop us a line. And we are always looking for more volunteers, so if you’d like to be part of a future event as a volunteer, just let us know.

Tuesday, June 07, 2022

Welcome Chloe Gerhardson to the PSF staff!

With great anticipation and excitement we are happy to announce that Chloe Gerhardson (she/her) has joined the Python Software Foundation (PSF) as of Monday, May 23, 2022. Chloe joins the team as Infrastructure Engineer, led by PSF Director of Infrastructure Ee Durbin.

Chloe Gerhardson, PSF Infrastructure Engineer

As a recent graduate of Springfield Technical Community College’s associate’s degree program in Computer Programming, Chloe will be growing into a role that supports the wide gamut of software technologies that facilitate the technical aspects of our community.

In time Chloe will share in all responsibilities of the Infrastructure Team and help the PSF move its infrastructure commitment to the community from a reactive and maintenance stance towards progress on new and expanded services to fulfill our mission.

In her own words…

I am thrilled and grateful to be brought along in the journey of growing and maintaining the PSF’s infrastructure, and I look forward to working with the PSF’s incredible staff members, as well as our wonderful community members at large.

Although excitement abounds for the learning, growing, and doing that this role requires, Chloe also stays busy as a committed and active Registered Yoga Teacher in her community, as well as a skateboarder, dog lover, traveler, and aficionado of live music.

Please join us in welcoming Chloe!

Friday, June 03, 2022

Python Developers Survey 2021: Python is everywhere

Python is being used by the vast majority (84%) of survey respondents as their primary language and by many others as just one more tool in their box. Interestingly, only 29% of the Python developers involved in data analysis and machine learning consider themselves to be Data Scientists. And like past years, many use Python in conjunction with other popular development and data tools like SQL, Jupyter Notebook, Virtualenv, Docker or with the most popular web development technologies including Django, JavaScript and HTML.

Results of the 2021 Python Developers Survey

This is also the first time we have packaging information in the survey. It was interesting to see the wide range of tools that Python users are employing for their packaging tasks. The PSF is currently focusing on improving the packaging ecosystem and building a roadmap for the future of packaging. We hope you will continue sharing your opinions with us on this part of your work so that we can continue improving.

Here's a link to the raw data, if you want to go deeper.

If you do look at the raw data and discover things you think the community would be interested in, we hope you’ll let us know. Please share your thoughts on Twitter or other social media, mentioning @jetbrains and @ThePSF with the #pythondevsurvey hashtag. We are also open to any suggestions and feedback related to this survey which could help us run an even better one next time. You are also encouraged to open issues here or join the conversation on our forum.

Wednesday, June 01, 2022

PSF Board Election Dates for 2022

Board elections are a chance for the community to help us find the next batch of folks to help us steer the PSF. This year there are 4 seats open on the PSF board. You can see who is on the board currently here. (Kushal, Jannis, Lorena and Marlene are at the end of their current terms.) Nominations for new board members open today!

Timeline:

- Nominations open: Wednesday, June 1st, 12:00 PM Eastern

- Board Director Nomination cut-off: Wednesday, June 15, 2022 AoE

- Voter application cut-off date: Wednesday, June 15, 2022 AoE

- Announce candidates: Thursday, June 16th

- Voting start date: Monday, June 20, 2022 AoE

- Voting end date: Thursday, June 30, 2022 AoE
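The timeline gives its deadlines in AoE ("Anywhere on Earth"), the convention that a date has not ended until it has ended in the UTC-12 time zone. As a small sketch (the helper name `aoe_deadline_utc` is ours, not part of any PSF tooling), this converts an AoE date into the UTC moment it closes and double-checks which weekday June 30, 2022 fell on.

```python
from datetime import date, datetime, timedelta, timezone

# "Anywhere on Earth" is the last time zone to finish a given day: UTC-12.
AOE = timezone(timedelta(hours=-12), "AoE")

def aoe_deadline_utc(day: date) -> datetime:
    """Return the UTC instant at which `day` has ended everywhere on Earth."""
    # Midnight at the start of the *next* day, measured in UTC-12.
    end_of_day = datetime(day.year, day.month, day.day, tzinfo=AOE) + timedelta(days=1)
    return end_of_day.astimezone(timezone.utc)

voting_ends = date(2022, 6, 30)
print(voting_ends.strftime("%A"))     # Thursday
print(aoe_deadline_utc(voting_ends))  # 2022-07-01 12:00:00+00:00
```

So a ballot cast at 11:00 UTC on July 1 would still count under this convention.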

Nominations should be made through this form (Note: you will need to sign into or create your python.org user account first). You can nominate yourself or someone else, but no one will be forced to run, so you may want to consider reaching out to someone before nominating them.

You need to be a contributing, managing, supporting, or fellow member by June 15th to vote in this election. Learn more about membership here, or if you have questions about membership or nominations please email psf-elections@python.org.

You are welcome to join the discussion about the PSF Board election on our forum or come find us in Slack.

Thursday, May 26, 2022

PyCon JP Association Awarded the PSF Community Service Award for Q4 2021

The PyCon JP Association team, from left to right: Takayuki, Shunsuke, Jonas, Manabu, Takanori

The PyCon JP Association team was awarded the 2021 Q4 Community Service Award.

RESOLVED, that the Python Software Foundation award the 2021 Q4 Community Service Award to the following members of the PyCon JP Association for their work in organizing local and regional PyCons, the work of monitoring our trademarks, and in particular organizing the "PyCon JP Charity Talks" raising more than $25,000 USD in funds for the PSF: Manabu Terada, Takanori Suzuki, Takayuki Shimizukawa, Shunsuke Yoshida, Jonas Obrist.

We interviewed Jonas Obrist on behalf of the PyCon JP Association to learn more about their inspiration, their work with the Python community, and supporting the Python Software Foundation (PSF). Débora Azevedo also contributed to this article by shedding more light on the PyCon JP Association's efforts and commitment to the Python community.

Jonas Obrist speaks on the motivation behind the PyCon JP Association

Débora Azevedo speaks about the impact and significance of the PyCon JP Association

Wednesday, May 11, 2022

The 2022 Python Language Summit: Achieving immortality

What does it mean to achieve immortality? At the 2022 Python Language Summit, Eddie Elizondo, an engineer at Instagram, and Eric Snow, CPython core developer, set out to explain just that.

Only for Python objects, though. Not for humans. That will have to be another PEP.

Objects in Python, as they currently stand

In Python, as is well known, everything is an object. This means that if you want to calculate even a simple sum, such as 194 + 3.14, the Python interpreter must create two objects: one object of type int representing the number 194, and another object of type float representing the number 3.14.

All objects in Python maintain a reference count: a running total of the number of active references to that object that currently exist in the program. In CPython, when the reference count of an object drops to 0, the object is destroyed and its memory is reclaimed; a separate cyclic garbage collector takes care of groups of objects that only refer to each other. This process ensures that programmers writing Python don’t normally need to concern themselves with manually deleting an object when they’re done with it. Instead, memory is automatically freed up.
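You can watch reference counting happen from Python itself. This minimal sketch assumes CPython: `sys.getrefcount()` is a real CPython function, but its absolute value includes a temporary reference for its own argument, so the example only compares differences. The `Tracked` class is hypothetical and exists purely to record the moment an instance is freed.

```python
import sys

class Tracked:
    """Records the name of each instance at the moment CPython deallocates it."""
    destroyed = []

    def __init__(self, name):
        self.name = name

    def __del__(self):
        Tracked.destroyed.append(self.name)

obj = Tracked("demo")
base = sys.getrefcount(obj)         # baseline, including getrefcount's own argument

alias = obj                         # a second name now refers to the same object
print(sys.getrefcount(obj) - base)  # 1

del alias                           # drop that reference again
print(sys.getrefcount(obj) - base)  # 0

del obj                             # last reference gone: the count hits 0
print(Tracked.destroyed)            # ['demo'] (CPython frees the object immediately)
```

Other implementations such as PyPy do not use reference counting, so the final line is CPython-specific behaviour.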

The need to keep reference counts for all objects (along with a few other mutable fields on all objects) means that there is currently no way of having a “truly immutable” object in Python.

This is a distinction that only really applies at the C level. For example, the None singleton cannot be mutated at runtime at the Python level:

>>> None.__bool__ = lambda self: True
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
AttributeError: 'NoneType' object attribute '__bool__' is read-only

However, at the C level, the object representing None is mutating constantly, as the reference count to the singleton changes constantly.

Immortal objects

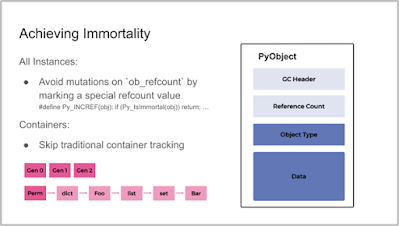

An “immortal object”, according to PEP 683 (written by Elizondo/Snow), is an object marked by the runtime as being effectively immutable, even at the C level. The reference count for an immortal object will never reach 0; thus, an immortal object will never be garbage-collected, and will never die.

“The fundamental improvement here is that now an object can be truly immutable.”

– Eddie Elizondo and Eric Snow, PEP 683

The lack of truly immutable objects in Python, PEP 683 explains, “can have a large negative impact on CPU and memory performance, especially for approaches to increasing Python’s scalability”.
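Since this post was written, PEP 683 was accepted and shipped in CPython 3.12, so the difference is observable from Python. The sketch below assumes CPython: it creates a thousand new references to `None` and measures how far `sys.getrefcount(None)` moves. On 3.12 and later, `None` is immortal and its count stays pinned; on earlier versions, the count should rise by the number of new references. The helper name is hypothetical.

```python
import sys

def none_refcount_delta(n=1000):
    """Create n extra references to None and report how much its refcount moved."""
    before = sys.getrefcount(None)
    holders = [None] * n          # n new references to the None singleton
    after = sys.getrefcount(None)
    del holders
    return after - before

delta = none_refcount_delta()
if sys.version_info >= (3, 12):
    # Immortal object: increments and decrements leave the count untouched.
    print("None is immortal; refcount delta =", delta)
else:
    # Mortal object: every new reference writes to None's header in memory.
    print("None is mortal; refcount delta =", delta)
```

The pre-3.12 case is exactly the "mutating constantly" behaviour described above: merely referencing `None` writes to its memory.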

The benefits of immortality

At their talk at the Python Language Summit, Elizondo and Snow laid out a number of benefits that their proposed changes could bring.

By guaranteeing “true memory immutability”, Elizondo explained, “we can simplify and enable larger initiatives,” including Eric Snow’s proposal for a per-interpreter GIL, and also Sam Gross’s proposal for a version of Python that operates without the GIL entirely. The proposal could also unlock new optimisation techniques in the future by helping create new ways of thinking about problems in the CPython code base.

The costs

A naive implementation of immortal objects is costly, resulting in performance regressions of around 6%. This is mainly due to adding a new branch of code to the logic keeping track of an object’s reference count.
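To see where that extra branch comes from, here is a deliberately simplified Python model. It is not CPython's C implementation: `IMMORTAL_REFCNT`, `ToyObject`, `incref` and `decref` are all hypothetical names, and the sentinel value is only loosely inspired by the approach PEP 683 describes. The point is that every increment and decrement must first ask "is this object immortal?". In C, that test sits on one of the hottest paths in the interpreter, which is plausibly why even a single comparison shows up as a measurable slowdown.

```python
# Toy model of reference counting with an immortality check (not CPython's code).
IMMORTAL_REFCNT = 2**32 - 1  # hypothetical sentinel marking an object as immortal

class ToyObject:
    def __init__(self, immortal=False):
        self.refcnt = IMMORTAL_REFCNT if immortal else 1
        self.freed = False

def incref(obj):
    if obj.refcnt == IMMORTAL_REFCNT:  # the extra branch, taken on every incref
        return
    obj.refcnt += 1

def decref(obj):
    if obj.refcnt == IMMORTAL_REFCNT:  # ...and on every decref
        return
    obj.refcnt -= 1
    if obj.refcnt == 0:
        obj.freed = True               # stands in for deallocation

mortal = ToyObject()
immortal = ToyObject(immortal=True)
for o in (mortal, immortal):
    incref(o)
    decref(o)
    decref(o)                          # one more decref than incref

print(mortal.freed, mortal.refcnt)                         # True 0
print(immortal.freed, immortal.refcnt == IMMORTAL_REFCNT)  # False True
```

The mortal object is freed once its count reaches zero, while the immortal one ignores every update and is never freed.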

With mitigations, however, Elizondo and Snow explained that the performance regression could be reduced to around 2%. The question they posed to the assembled developers in the audience was whether this was an “acceptable” performance regression – and, if not, what would be?

Reception

The proposal was greeted with a mix of curious interest and healthy scepticism. There was agreement that certain aspects of the proposal would find wide support among the community, and consensus that a performance regression of 1-2% would be acceptable if clear benefits could be shown. However, there was also concern that parts of the proposal would change semantics in a backwards-incompatible way.

Pablo Galindo Salgado, Release Manager for Python 3.10/3.11 and CPython Core Developer, worried that all the benefits laid out by the speakers were only potential benefits, and asked for more specifics. He pointed out that changing the semantics of reference-counting would be likely to break an awful lot of projects, given that popular third-party libraries such as numpy, for example, use C extensions which continuously check reference counts.

Thomas Wouters, CPython Core Developer and Steering Council Member, concurred, saying that it probably “wasn’t possible” to make these changes without changing the stable ABI. Kevin Modzelewski, a maintainer of Pyston, a performance-oriented fork of Python 3.8, noted that Pyston had had immortal objects for a while – but Pyston had never made any promise to support the stable ABI, freeing the project of that constraint.

The 2022 Python Language Summit: A per-interpreter GIL

“Hopefully,” the speaker began, “This is the last time I give a talk on this subject.”

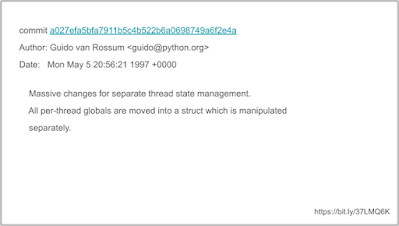

“My name is Eric Snow, I’ve been a core developer since 2012, and I’ve been working towards a per-interpreter GIL since 2014.”

In 1997, the PyInterpreterState struct was added to CPython, allowing multiple Python interpreters to be run simultaneously within a single process. “For the longest time,” Snow noted, speaking at the 2022 Python Language Summit, this functionality was little used and little understood. In recent years, however, awareness and adoption of the idea have been spreading.

Multiple interpreters, however, cannot yet be run in true isolation from each other when run inside the same process. Part of this is due to the GIL (“Global Interpreter Lock”), a core feature of CPython that is the building block for much of the language. The obvious solution to this problem is to have a per-interpreter GIL: a separate lock for each interpreter spawned within the process.

With a per-interpreter GIL, Snow explained, CPython will be able to achieve true multicore parallelism for code running in different interpreters.

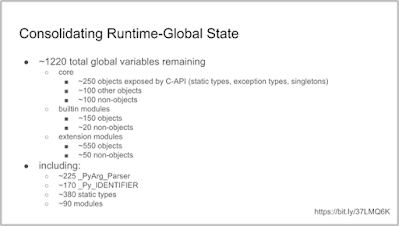

A per-interpreter GIL, however, is no small task. “In general, any mutable state shared between multiple interpreters must be guarded by a lock,” Snow explained. Ultimately, what this means is that the amount of state shared between multiple interpreters must be brought down to an absolute minimum if the GIL is to become per-interpreter. As of 2017, there were still several thousand global variables; now, after a huge amount of work (and several PEPs), this has been reduced to around 1200 remaining globals.
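The locking rule Snow describes can be modelled with ordinary threads. This is only an illustration under loud assumptions: `ToyInterpreter`, `report` and `SHARED_LOCK` are hypothetical names, each "interpreter" is just an object with its own lock, and because everything runs in one ordinary CPython interpreter under the usual process-wide GIL, it shows *which lock guards which state*, not real parallelism.

```python
import threading

class ToyInterpreter:
    """Hypothetical stand-in for an interpreter that owns its state and its own lock."""

    def __init__(self, name):
        self.name = name
        self.lock = threading.Lock()  # plays the role of a per-interpreter GIL
        self.counter = 0              # state that only this interpreter touches

    def run(self, n):
        for _ in range(n):
            with self.lock:           # contends only with work in *this* interpreter
                self.counter += 1
        report(n)                     # touching shared state needs the shared lock

SHARED_LOCK = threading.Lock()        # anything shared across interpreters needs its own lock
shared_total = 0

def report(n):
    global shared_total
    with SHARED_LOCK:
        shared_total += n

interps = [ToyInterpreter("a"), ToyInterpreter("b")]
threads = [threading.Thread(target=i.run, args=(10_000,)) for i in interps]
for t in threads:
    t.start()
for t in threads:
    t.join()

print([i.counter for i in interps], shared_total)  # [10000, 10000] 20000
```

The fewer globals like `shared_total` that remain, the less often interpreters have to meet at a shared lock. That is why the work described above focuses on eliminating global state.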

“Basically, we can’t share objects between interpreters”

– Eric Snow

Troubles on the horizon

The reception to Snow’s talk was positive, but a number of concerns were raised by audience members.

One potential worry is the fact that any C-extension module that wishes to be compatible with subinterpreters will have to make changes to its design. Snow is happy to work on fixing these for the standard library, but there’s concern that end users of Python may put pressure on third-party library maintainers to provide support for multiple interpreters. Nobody wishes to place an undue burden on maintainers who are already giving up their time for free; subinterpreter support should remain an optional feature for third-party libraries.

Larry Hastings, a core developer in the audience for the talk, asked Snow what exactly the benefits of subinterpreters were compared to the existing multiprocessing module (which runs interpreters in parallel in separate processes), given that sharing objects between the different interpreters would pose so many difficulties. Subinterpreters, Snow explained, hold significant speed benefits over multiprocessing in many respects.

Hastings also queried how well the idea of a per-interpreter GIL would interact with Sam Gross’s proposal for a version of CPython that removed the GIL entirely. Snow replied that there was minimal friction between the two projects. “They’re not mutually exclusive,” he explained. Almost all the work required for a per-interpreter GIL “is stuff that’s a good idea to do anyway. It’s already making CPython faster by consolidating memory”.

The 2022 Python Language Summit: Python in the browser

Python can be run on many platforms: Linux, Windows, Apple Macs, microcomputers, and even Android devices. But it’s a widely known fact that, if you want code to run in a browser, Python is simply no good – you’ll just have to turn to JavaScript.

Now, however, that may be about to change. Over the course of the last two years, and following over 60 CPython pull requests (many attached to GitHub issue #84461), Core Developer Christian Heimes and contributor Ethan Smith have reached the point where the CPython main branch can be compiled to WebAssembly. This opens up the possibility of running arbitrary Python programs clientside inside your web browser of choice.

At the 2022 Python Language Summit, Heimes gave a talk updating attendees on the progress he had made so far, and on where the project hopes to go next.

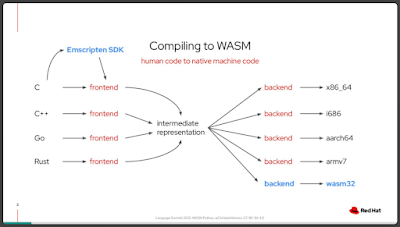

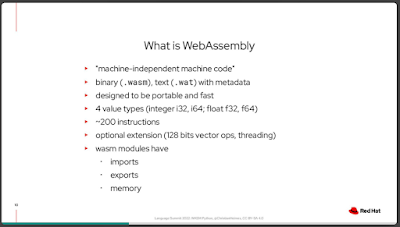

WebAssembly basics

WebAssembly (or “WASM”, for short), Heimes explained, is a low-level, assembly-like language that can be as fast as native machine code. Unlike ordinary machine code, however, WebAssembly is independent of the machine it runs on: its core principle is that it can run anywhere, in a completely isolated environment. The result is a language that is extremely fast, extremely portable, and poses minimal security risks, which makes it perfect for running clientside in a web browser.

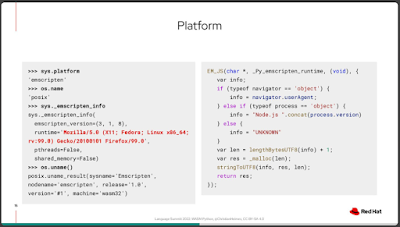

After much work, CPython now cross-compiles to WebAssembly using Emscripten, via the --with-emscripten-target=browser configure flag. The CPython test suite now also passes on Emscripten builds, and work is under way to add a buildbot to CPython’s fleet of automatic robot testers, to ensure this work does not regress in the future.

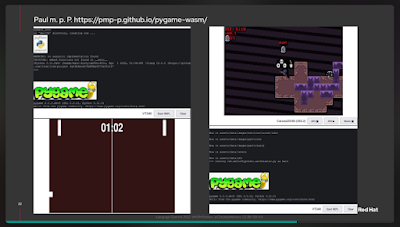

Users who want to try Python in the browser can do so at https://repl.ethanhs.me/. The work opens up exciting possibilities, such as running PyGame clientside and adding Jupyter bindings.

Support status

It should be noted that cross-compiling to WebAssembly is still highly experimental, and not yet officially supported by CPython. Several important modules in the Python standard library are not currently included in the bundled package produced when --with-emscripten-target=browser is specified, leading to a number of tests needing to be skipped in order for the test suite to pass.
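Because some standard-library modules may be absent from a browser build, portable code has to treat them as optional. The sketch below shows one defensive pattern under stated assumptions. It relies on `sys.platform`, which CPython sets to `"emscripten"` for Emscripten builds and `"wasi"` for WASI builds. `optional_import` is a hypothetical helper, and `sqlite3` is only an illustrative choice, not a claim about which modules the Emscripten build omits.

```python
import importlib
import sys

# CPython builds for Emscripten report "emscripten" here; WASI builds report "wasi".
RUNNING_ON_WASM = sys.platform in ("emscripten", "wasi")


def optional_import(name: str):
    """Return the named module, or None if this build does not ship it."""
    try:
        return importlib.import_module(name)
    except ImportError:
        return None


# "sqlite3" is only an illustrative choice of optional module.
sqlite3 = optional_import("sqlite3")
if sqlite3 is None:
    print("sqlite3 is not available in this build; using a fallback")
print("WebAssembly build:", RUNNING_ON_WASM)
```

Probing for the module itself, rather than only checking the platform, keeps the code correct if a later build adds the module back.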

Nonetheless, the future’s bright. Only a few days after Heimes’s talk, Peter Wang, CEO at Anaconda, announced the launch of PyScript in a PyCon keynote address. PyScript is a tool that allows Python to be called from within HTML, and to call JavaScript libraries from inside Python code – potentially enabling a website to be written entirely in Python.

PyScript is currently built on top of Pyodide, a third-party project bringing Python to the browser, on which work began before Heimes started his work on the CPython main branch. With Heimes’s modifications to Python 3.11, this effort will only become easier.

The 2022 Python Language Summit: Lightning talks

These were a series of short talks, each lasting around five minutes.


Lazy imports, with Carl Meyer

Carl Meyer, an engineer at Instagram, presented on a proposal that has since blossomed into PEP 690: lazy imports, a feature that has already been implemented in Cinder, Instagram’s performance-optimised fork of CPython 3.8.

What’s a lazy import? Meyer explained that the core difference with lazy imports is that the import does not happen until the imported object is referenced.
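PEP 690 implements lazy imports inside the interpreter, so that ordinary `import` statements become lazy. The standard library already offers a narrower, opt-in mechanism with similar semantics, `importlib.util.LazyLoader`, and the minimal sketch below uses it (not PEP 690) to show the core idea: the import "happens", but the module's code runs only on first attribute access. `EggsLoader` and `lazy_import` are hypothetical names. The in-memory loader simply keeps the example self-contained without a real `eggs.py` on disk.

```python
import importlib.abc
import importlib.util
import sys

executed: list[str] = []  # names of modules whose body has actually run


class EggsLoader(importlib.abc.Loader):
    """Hypothetical in-memory loader standing in for a real eggs.py."""

    def exec_module(self, module):
        executed.append(module.__name__)  # the "module body" runs here
        module.bacon_function = lambda: "bacon!"


def lazy_import(name: str, loader: importlib.abc.Loader):
    """Build a module whose body runs only when an attribute is first used."""
    lazy_loader = importlib.util.LazyLoader(loader)
    spec = importlib.util.spec_from_loader(name, lazy_loader)
    module = importlib.util.module_from_spec(spec)
    sys.modules[name] = module
    lazy_loader.exec_module(module)  # marks the module lazy; EggsLoader has not run yet
    return module


eggs = lazy_import("eggs", EggsLoader())
print(executed)               # prints []
print(eggs.bacon_function())  # first reference triggers the real load; prints bacon!
print(executed)               # prints ['eggs']
```

If `eggs.bacon_function` were never touched, `EggsLoader.exec_module` would never run, mirroring the spam.py case in the examples.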

Examples

Consider a Python module, spam.py, that imports a module called eggs but never references eggs after the import. With lazy imports activated, eggs would never in fact be imported.

Now consider a second module, ham.py, that imports a function called bacon_function but only uses it right at the end of the script, after a for-loop that takes a very long time to finish. With lazy imports activated, bacon_function is still imported, but only at that final point, when it is first referenced.

Meyer revealed that the Instagram team’s work on lazy imports had resulted in startup time improvements of up to 70%, memory usage improvements of up to 40%, and the elimination of almost all import cycles within their code base. (This last point will be music to the ears of anybody who has worked on a Python project larger than a few modules.)

Downsides

Meyer also laid out a number of costs to having lazy imports. Lazy imports create the risk that ImportError (or any other error resulting from an unsuccessful import) could potentially be raised… anywhere. Import side effects could also become “even less predictable than they already weren’t”.

Lastly, Meyer noted, “If you’re not careful, your code might implicitly start to require it”. In other words, you might unexpectedly find that your code no longer runs without lazy imports enabled, because relying on the feature has let your code base become a huge, tangled mess of cyclic imports.

Where next for lazy imports?

Python users who have opinions either for or against the proposal are encouraged to join the discussion on discuss.python.org.

Python-Dev versus Discourse, with Thomas Wouters

This was less of a talk, and more of an announcement.

Historically, if somebody wanted to make a significant change to CPython, they were required to post on the python-dev mailing list. The Steering Council now views the alternative venue for discussion, discuss.python.org, as a superior forum in many respects.

Thomas Wouters, Core Developer and Steering Council member, said that the Steering Council was planning on loosening the requirements, stated in several places, that emails had to be sent to python-dev in order to make certain changes. Instead, they were hoping that discuss.python.org would become the authoritative discussion forum in the years to come.

Asks from Pyston, with Kevin Modzelewski

Kevin Modzelewski, core developer of the Pyston project, gave a short presentation on ways forward for CPython optimisations. Pyston is a performance-oriented fork of CPython 3.8.12.

Modzelewski argued that CPython needed better benchmarks; the existing benchmarks on pyperformance were “not great”. Modzelewski also warned that his “unsubstantiated hunch” was that the Faster CPython team had already accomplished “greater than one-half” of the optimisations that could be achieved within the current constraints. Modzelewski encouraged the attendees to consider future optimisations that might cause backwards-incompatible behaviour changes.

Core Development and the PSF, with Thomas Wouters

This was another short announcement from Thomas Wouters on behalf of the Steering Council. After Google provided funding for the first-ever CPython Developer-In-Residence (Łukasz Langa), Meta has provided sponsorship for a second year. The Steering Council also now has sufficient funds to hire a second Developer-In-Residence, and attendees were told that the Council was open to hiring somebody who was not currently a core developer.

“Forward classes”, with Larry Hastings

Larry Hastings, CPython core developer, gave a brief presentation on a proposal he had sent round to the python-dev mailing list in recent days: a “forward class” declaration intended to avoid the problems of two competing typing PEPs, PEP 563 and PEP 649. In brief, the proposal would allow a class to be declared ahead of time, with its body supplied in a later statement. In theory, according to Hastings, this syntax could avoid issues around runtime evaluation of annotations that have plagued PEP 563, while also circumventing many of the edge cases that unexpectedly fail in a world where PEP 649 is implemented.
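The underlying problem is annotations that refer to a class before it exists. The sketch below illustrates the PEP 563 side of this and makes no claim about the forward-class syntax, which is not valid Python. PEP 563 stores every annotation as a string. To stay independent of Python version and import placement, this sketch writes the quoted strings by hand rather than using `from __future__ import annotations`. It then shows how resolving those strings at runtime with `typing.get_type_hints` works for module-level classes but fails for a class defined inside a function. `Node`, `Leaf` and `make_local_class` are hypothetical names.

```python
import typing


class Node:
    # PEP 563 turns annotations into strings, as if quoted by hand.
    # Quoting lets this refer to Leaf before Leaf exists.
    def first_child(self) -> "Leaf":
        return Leaf()


class Leaf(Node):
    pass


print(Node.first_child.__annotations__)  # prints {'return': 'Leaf'}
# Runtime tools later evaluate the string against the module's globals.
print(typing.get_type_hints(Node.first_child)["return"] is Leaf)  # prints True


def make_local_class():
    class Local:
        def clone(self) -> "Local":  # "Local" exists only in this function's scope
            return self

    return Local


# The same evaluation fails here: "Local" is not a module-level name.
try:
    typing.get_type_hints(make_local_class().clone)
except NameError as exc:
    print("could not resolve annotation:", exc)
```

Failures of this kind, where a string annotation cannot be turned back into an object at runtime, are what both PEP 649 and the forward-class idea try to avoid.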

The idea was in its early stages, and reaction to the proposal was mixed. The next day, at the Typing Summit, there was more enthusiasm voiced for a plan laid out by Carl Meyer for a tweaked version of Hastings’s earlier attempt at solving this problem: PEP 649.

Better fields access, with Samuel Colvin

Samuel Colvin, maintainer of the Pydantic library, gave a short presentation on a proposal (recently discussed on discuss.python.org) to reduce name clashes between field names in a subclass, and method names in a base class.

The problem is simple. Suppose you’re a maintainer of a library, whatever_library. You release Version 1 of your library, and a user starts using it to make classes that subclass BaseModel and have an instance attribute named fields. Both the user and the maintainer are happy, until the maintainer releases Version 2 of the library. Version 2 adds a method, .fields(), to BaseModel, which will print out all the field names of a subclass. But this creates a name clash with your user’s existing code, which has fields as the name of an instance attribute rather than a method.

Colvin briefly sketched out an idea for a new way of looking up names that would make it unambiguous whether the name being accessed was a method or attribute.
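The clash can be reproduced in a few lines. This is a minimal sketch in which `BaseModel` is a hypothetical stand-in for whatever_library's Version 2 class (it is not Pydantic's `BaseModel`), and `Farm` is a hypothetical user class. It shows the clash itself, not Colvin's proposed fix. Because a method is stored on the class while the user's value is stored on the instance, the instance attribute wins on lookup and silently hides the new method.

```python
# Hypothetical whatever_library, Version 2.
class BaseModel:
    def __init__(self, **data):
        # Store each keyword argument as an instance attribute (a "field").
        for name, value in data.items():
            setattr(self, name, value)

    def fields(self) -> list[str]:
        """New in Version 2: return the field names of this instance."""
        return list(vars(self))


# User code written against Version 1, when "fields" was free to use.
class Farm(BaseModel):
    pass


farm = Farm(name="Sunny Acres", fields=["north", "south"])

# The instance attribute shadows the new method...
print(farm.fields)  # prints ['north', 'south']

# ...so anything expecting the Version 2 method now breaks.
try:
    farm.fields()
except TypeError as exc:
    print("name clash:", exc)  # prints name clash: 'list' object is not callable
```

Neither side did anything wrong here, which is why the fix Colvin sketched targets name lookup itself.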